Reduce speaker attribution errors

Agent vs. customer turns, correctly tagged, even across overlapping speech, hold music, IVR carryover, and three-way calls.

Make voice agents production-resilient

Real calls are messy. Our models are built for the actual conditions of contact center audio, not benchmark recordings.

Power QA scoring and analytics that actually work

Talk ratio, per-speaker sentiment, agent coaching: all of it depends on knowing who said what. Get attribution right, and everything downstream works.
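To show why attribution errors propagate downstream, here is a minimal sketch of a talk-ratio calculation over speaker-attributed segments. The `Segment` type and its `speaker`/`start`/`end` fields are hypothetical illustrations, not this product's output format. Overlapping speech counts toward every speaker talking at that moment.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    # Hypothetical diarization output: one speaker-attributed span, in seconds.
    speaker: str
    start: float
    end: float


def talk_ratio(segments: list[Segment], speaker: str) -> float:
    """Share of total speaking time attributed to `speaker` (0.0 to 1.0).

    Overlapping speech counts toward every speaker talking at that moment,
    so the denominator is the sum of all per-speaker durations.
    """
    totals: dict[str, float] = {}
    for seg in segments:
        totals[seg.speaker] = totals.get(seg.speaker, 0.0) + (seg.end - seg.start)
    total = sum(totals.values())
    return totals.get(speaker, 0.0) / total if total > 0 else 0.0


segments = [
    Segment("agent", 0.0, 6.0),
    Segment("customer", 6.0, 8.0),
    Segment("agent", 8.0, 10.0),
]
print(talk_ratio(segments, "agent"))  # 0.8
```

If the final two-second agent turn were mislabeled as the customer, the same call would report an agent talk ratio of 0.6 instead of 0.8: a single attribution error moves the metric by 20 points.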

Plug in beneath your existing stack

Works alongside any STT, any LLM, and any voice agent framework. We don't replace your stack; we make it work.
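One common way a diarization layer sits beneath an existing STT engine is to give each transcribed word the speaker whose segment overlaps it most in time. The sketch below assumes both systems emit timestamps in seconds; the `Word` and `Segment` shapes and the `assign_speakers` helper are hypothetical, and max-overlap assignment is one simple merging strategy, not necessarily the one this product uses.

```python
from dataclasses import dataclass


@dataclass
class Word:
    # Hypothetical STT output: a word with start/end times in seconds.
    text: str
    start: float
    end: float


@dataclass
class Segment:
    # Hypothetical diarization output: a speaker-attributed time span.
    speaker: str
    start: float
    end: float


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length of the intersection of two time intervals (0.0 if disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(words: list[Word], segments: list[Segment]) -> list[tuple[str, str]]:
    """Label each word with the speaker whose segment overlaps it most.

    Words that overlap no segment are labeled "unknown".
    """
    labeled = []
    for word in words:
        best_speaker, best_overlap = "unknown", 0.0
        for seg in segments:
            ov = overlap(word.start, word.end, seg.start, seg.end)
            if ov > best_overlap:
                best_speaker, best_overlap = seg.speaker, ov
        labeled.append((word.text, best_speaker))
    return labeled


words = [Word("hello", 0.1, 0.4), Word("hi", 0.9, 1.1), Word("there", 1.2, 1.5)]
segments = [Segment("agent", 0.0, 0.8), Segment("customer", 0.8, 2.0)]
print(assign_speakers(words, segments))
# [('hello', 'agent'), ('hi', 'customer'), ('there', 'customer')]
```

Because the merge only needs timestamps, the STT engine and the diarization layer can be swapped independently.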

Use cases

Voice agent input layer: Real-time speaker attribution so your agent acts on the right turn

Conversation analytics: Agent vs. customer separation for QA, coaching, and CSAT analysis

Compliance & call recording: Speaker-attributed, timestamped records for regulated review

Agent assist & coaching: Per-speaker insights surfaced live for supervisors

Voice agent evaluation: Measure turn-taking, latency, and consistency in production agents
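As an example of the kind of turn-taking measurement speaker attribution makes possible, here is a minimal sketch that computes the gap between the end of each non-agent turn and the start of the agent's reply. The `Segment` shape and the `response_latencies` helper are hypothetical; negative values indicate the agent started speaking before the other party finished.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    # Hypothetical speaker-attributed span, times in seconds.
    speaker: str
    start: float
    end: float


def response_latencies(segments: list[Segment], agent: str = "agent") -> list[float]:
    """Gap from the end of each non-agent turn to the start of the next agent turn.

    Only consecutive (non-agent -> agent) pairs are measured. Negative values
    mean the agent barged in before the other speaker finished.
    """
    ordered = sorted(segments, key=lambda s: s.start)
    latencies = []
    for prev, nxt in zip(ordered, ordered[1:]):
        if prev.speaker != agent and nxt.speaker == agent:
            latencies.append(round(nxt.start - prev.end, 3))
    return latencies


segments = [
    Segment("customer", 0.0, 2.0),
    Segment("agent", 2.6, 5.0),
    Segment("customer", 5.2, 7.0),
    Segment("agent", 6.8, 9.0),
]
print(response_latencies(segments))  # [0.6, -0.2]
```

A mislabeled turn here would either hide a real barge-in or invent a latency that never happened, which is why evaluation depends on attribution.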

Features

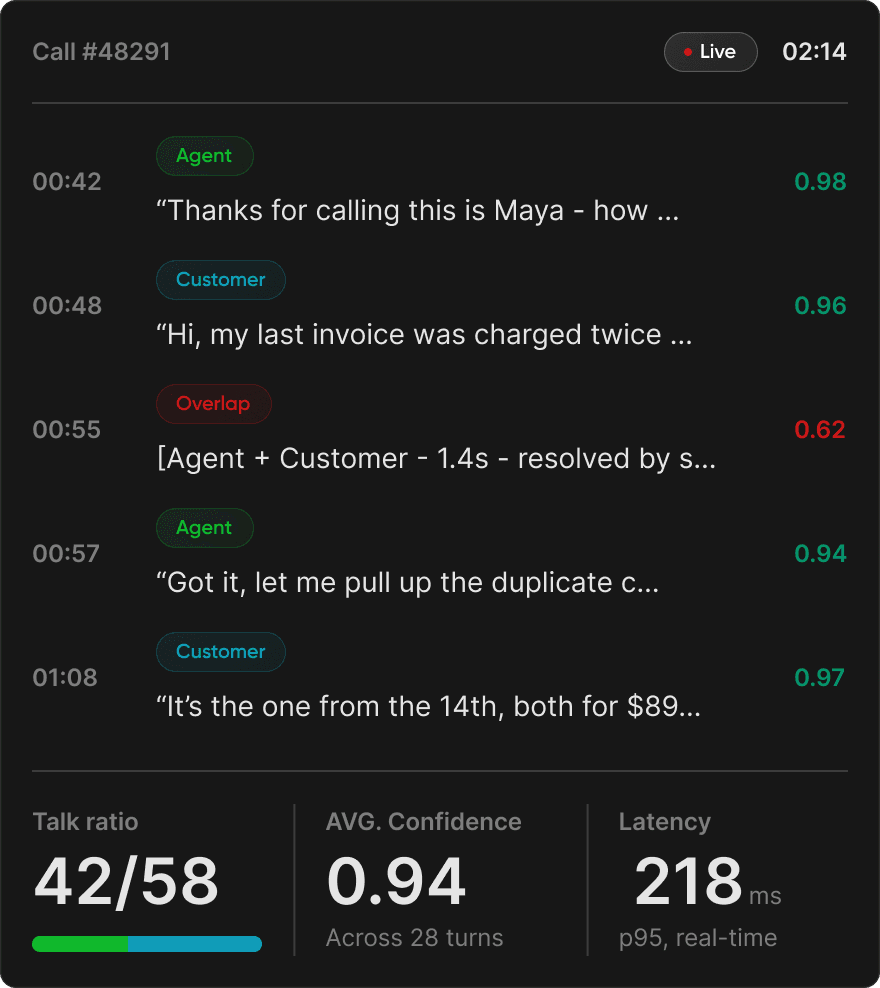

Real-time diarization

Sub-second speaker attribution for live agent assist and voice agents.

Overlap & interruption handling

Tag who's talking, even when speakers overlap or interrupt each other.

Speaker identification

Models tuned to recognize your contact center agents.

Confidence scoring

Flag uncertain turns for human review or fallback logic.

Enterprise-grade reliability

Built for the SLAs your customers expect.
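The confidence-scoring feature lends itself to simple routing logic. Below is a minimal sketch, assuming each attributed turn carries a confidence value between 0 and 1; the `Turn` fields, the `route_turns` helper, and the 0.7 threshold are illustrative assumptions, not this product's schema or a recommended setting.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    # Hypothetical attributed turn; confidence is assumed to be in [0, 1].
    speaker: str
    text: str
    confidence: float


def route_turns(turns: list[Turn], threshold: float = 0.7) -> tuple[list[Turn], list[Turn]]:
    """Split turns into (accepted, needs_review) by a confidence threshold.

    Turns at or above the threshold are trusted; the rest are flagged for
    human review or handed to fallback logic.
    """
    accepted = [t for t in turns if t.confidence >= threshold]
    needs_review = [t for t in turns if t.confidence < threshold]
    return accepted, needs_review


turns = [
    Turn("agent", "Thanks for calling.", 0.97),
    Turn("customer", "I need to cancel.", 0.91),
    Turn("agent", "Sure, one moment.", 0.42),  # e.g. cross-talk made attribution uncertain
]
accepted, needs_review = route_turns(turns)
print(len(accepted), len(needs_review))  # 2 1
```

The threshold trades review workload against attribution errors reaching downstream systems; in a live voice agent, a low-confidence turn might trigger a clarifying prompt rather than human review.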

hours processed

languages supported

latency for real-time workflows